What’s this site hosted on?


I feel the need - the need for speed…

A site this popular needs some pretty serious kit to keep it running. You’re not going to be able to host this kind of traffic with a Geocities[1] site.

Nope, you need real kit. Servers. A cluster. Orchestration! The Works.

Behold, the Snowgoons Server Environment:

Uhhh…

WHAT?

Tell me more about the hardware

So, what we have here is a four ‘server’ cluster (albeit only three are actually in the cluster, but more about that later…), made up of enormously underpowered machines.

More seriously, this is my home Kubernetes cluster. It basically exists so I can play around with Kubernetes enough to be dangerous. Clearly, nobody is going to run anything in production off this (well, apart from my little website, but nobody cares about that, least of all me), but it works as a sandpit for playing around with tools and techniques.

The hardware resources are provided by four Dell Optiplex “thin client” computers, bought for a song (around $25 each) from eBay - they’ve gone from a boring life on a bank clerk’s desk to a more exciting retirement as Internet servers. The specs are not hugely impressive; as bought they were:

| Item | Spec |
|---|---|
| Processor | Intel Atom 330 dual-core |
| Clock Speed | 1.6GHz |
| RAM | 1GB |
| Storage | 2GB Flash |

Now, it’s not actually impossible to get Kubernetes up and running on kit with this spec, but it is very difficult. Finding a working host OS that will live with only 2GB of storage is hard, and while getting K8s working with the Docker root mounted over NFS is possible, it’s a world of hurt. So after proving it was doable, I gave the machines a little upgrade. Fortunately, these little Dells take standard DDR2 DIMMs for RAM, and while the built-in storage is a proprietary small-form-factor Flash chip, it’s attached to the motherboard with a standard SATA connector.

Some L-shaped SATA connectors, cheap OEM SSDs, and a few DIMMs later, I was able to upgrade the machines to 4GB of RAM each, and a couple of hundred GB of local storage. They’re still not exactly super-fast, but with this spec they can run Docker and Kubernetes pretty reliably.

Importantly, given they are in my living room, they are also basically silent running.

But why do this to yourself? Just use AWS, or Google Cloud, or…

It’s not a bad question. In fairness, the last version of this site ran on EC2 with an RDS database behind it, and I’m a big fan of AWS generally (I have some side projects playing with Amazon’s Machine Learning tools that I’m sure I’ll write about someday.) But when it came to Kubernetes, I figured just spinning up GKE (say) would be a great way to use it, but a bad way to learn it.

I take an old-fashioned view of learning a technology - break it, then learn how to mend it, and you can master it. And nothing breaks Kubernetes quite like being run on hardware that is manifestly unsuitable… You learn a lot that you’d probably never learn on GKE: about etcd consensus algorithms, or about how impenetrable it is to find out what the hell is going wrong (normally, on this platform, stuff timing out) if you don’t have a good logging solution set up. Or at least, I’d only learn it after something catastrophic happened to a production system that I had no understanding of how to debug.

With this little toytown system, I can engineer catastrophic failure scenarios on a daily basis, and hopefully learn from them. And once I’ve learned how the magic works, I don’t mind using something like GKE for real production (I’m not a total masochist…)

OK then, so, Kubernetes?

Yes, this little stack is running Kubernetes. Actually, only three of the nodes are, and of those only one runs the control plane (the other two are workers). Not exactly ideal from a resilience perspective, but there are limits on what this kit is capable of delivering…
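To put a rough number on "not exactly ideal": etcd, the store behind the Kubernetes control plane, uses the Raft consensus algorithm, and Raft needs a majority of members to agree before a write is accepted. Here is a minimal sketch of that arithmetic. The function names are mine (nothing here is an etcd or Kubernetes API), and I'm assuming etcd lives only on the single control plane node, which this post doesn't actually spell out.

```python
def quorum(members: int) -> int:
    """Smallest majority of a consensus group with `members` voting members."""
    if members < 1:
        raise ValueError("a cluster needs at least one member")
    return members // 2 + 1


def failures_tolerated(members: int) -> int:
    """How many members can be lost while a majority can still be formed."""
    return members - quorum(members)


# Compare a single-member setup (assumed here) with the usual 3 and 5.
for n in (1, 3, 5):
    print(f"{n} member(s): quorum {quorum(n)}, "
          f"tolerates {failures_tolerated(n)} failure(s)")
```

With one member the quorum is one and the number of failures you can survive is zero, which is the whole resilience story of this cluster in a single line. Going from three to four members buys nothing, which is why odd sizes are the norm.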

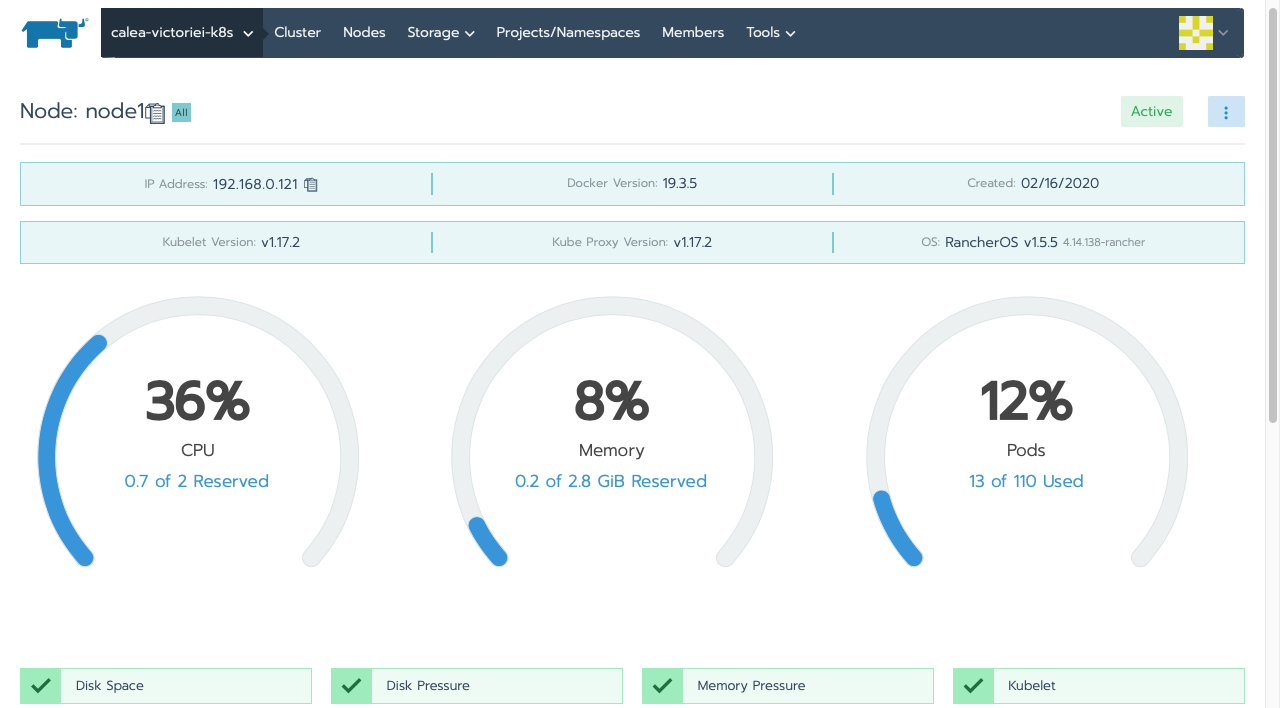

The fourth node is running Rancher. Rancher is a super nice tool for managing Kubernetes, and in this infrastructure I have a node dedicated to only Rancher - that means when Kubernetes itself is overloaded and its API isn’t responding, at least my management interface is still working. And work incredibly reliably it does - it’s super nice. I don’t actually use any of the Rancher “magic” to handle things like Istio or logging configuration; I prefer to manage that myself using standard Kubernetes deployments.

If you look at that image, you’ll notice that Rancher is giving me one more important piece of the puzzle though - the host OS.

RancherOS - Lightweight, Brilliant

I tried a lot of different host operating systems for this Kubernetes cluster. Many, many flavours of Linux - some standard off-the-shelf, some optimised for containers. Many of them simply refused to have anything to do with servers that were so under-powered, and others appeared to work but died painfully because they didn’t really like the Atom[2] CPUs in these machines.

One exception, though, has been 100% reliable from the get-go: RancherOS. It’s a really nice, lightweight distribution designed specifically for containers, and you don’t need to use Rancher to take advantage of it.
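As an aside on those fussy CPUs: on x86 Linux, the instruction-set extensions a processor advertises are listed on the `flags` line of `/proc/cpuinfo`, so you can check a machine before committing to a distro. A minimal sketch follows. The helper names, the sample text, and the set of "wanted" flags are all made up for illustration; which extensions any particular build actually needs is something you would have to find out for yourself.

```python
def cpu_flags(cpuinfo_text: str) -> set[str]:
    """Collect the feature flags from /proc/cpuinfo-style text (x86 Linux layout)."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            # Every core repeats the same line, so the first one is enough.
            return set(value.split())
    return set()


def missing_flags(cpuinfo_text: str, wanted: set[str]) -> set[str]:
    """Flags in `wanted` that the CPU does not advertise."""
    return wanted - cpu_flags(cpuinfo_text)


# Made-up sample for illustration, NOT captured from one of these machines.
SAMPLE = """\
processor\t: 0
model name\t: Example CPU
flags\t\t: fpu mmx sse sse2 pni ssse3
"""

# Hypothetical requirements; a real build may want a different set.
WANTED = {"sse2", "ssse3", "sse4_1", "sse4_2"}

print(sorted(missing_flags(SAMPLE, WANTED)))  # → ['sse4_1', 'sse4_2']
```

On a real box you would pass in `open("/proc/cpuinfo").read()` instead of the sample text.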

So, wrapping up

The answer to the question “what is this site hosted on” is, some boxes from eBay, a super nice container OS, and this:

    kubectl -n dmz describe pod snowgoons-httpd-665465984d-m5jcc
    Name:           snowgoons-httpd-665465984d-m5jcc
    Namespace:      dmz
    Priority:       0
    Node:           node3/192.168.0.123
    Start Time:     Mon, 11 May 2020 14:08:34 +0300
    Labels:         app=httpd
                    pod-template-hash=665465984d
                    site=snowgoons
    Annotations:    cni.projectcalico.org/podIP: 10.42.2.180/32
    Status:         Running
    IP:             10.42.2.180
    IPs:
      IP:           10.42.2.180
    Controlled By:  ReplicaSet/snowgoons-httpd-665465984d

1. If you don’t remember Geocities, you might be in the wrong place. But consider yourself lucky.

2. Atom CPUs are mostly AMD64/x86 compatible, but they are missing a few of the Intel SSE instructions, and you’d be surprised how many bits of many Linux builds curl up and die when they don’t find those instructions. And custom-building a distro was not in scope for this project - I was doing that kind of thing when I was 14[3], it’s not interesting any more.

3. Albeit with NetBSD - a much nicer operating system than Linux. But that battle was lost long ago.